The festive season is a time to eat, drink and be merry, but it can

Continue reading »

A MAJOR wine retailer is closing its more than 200 stores for an extra day

Continue reading »

A FEMALE mechanic has opened up about her career choice – and hit back at

Continue reading »

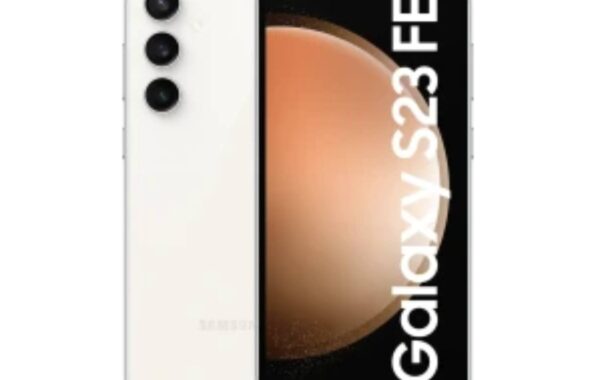

MOBILES.CO.UK is offering two freebies with a brand-new Android smartphone on the iD Mobile network.

Continue reading »

THE UK is becoming a world leader when it comes to wine – and a small

Continue reading »

IMAGINE forking out £30 for a posh secret Santa pressie only to then receive an

Continue reading »

Sleigh, what? From carving radishes to building giant goats – 10 weird and wonderful Christmas

Continue reading »

I love Greta Gerwig as an artist – an underrated actress, a gifted writer and

Continue reading »

With Christmas just a week away, UK shoppers are on the hunt

Continue reading »

Gardening tips: Four homemade hacks to kill garden weeds

No one wants

Continue reading »

A MAJOR energy supplier has announced a package to wipe debts for

Continue reading »